Tag: Disney

-

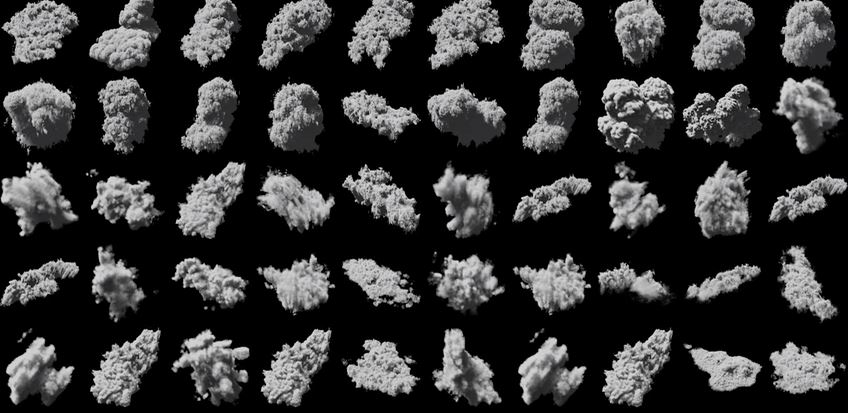

Disney Releases Production Assets for R&D and Education

Disney Animation has released two production assets for use in computer graphics research, software development, or education. The first data set is a realistic cloud that could be used, for example, in volume rendering research. The large and highly detailed…

-

Disney’s Practical Guide to Path Tracing

Path tracing is a method for generating digital images by simulating how light would interact with objects in a virtual world. The path of light is traced by shooting rays (line segments) into the scene and tracking them as they…

-

Disney’s AI Learns To Render Clouds

We present a technique for efficiently synthesizing images of atmospheric clouds using a combination of Monte Carlo integration and neural networks. The intricacies of Lorenz-Mie scattering and the high albedo of cloud-forming aerosols make rendering of clouds…

-

Simulation-Ready Hair Capture by Disney

Physical simulation has long been the approach of choice for generating realistic hair animations in CG. A constant drawback of simulation, however, is the necessity to manually set the physical parameters of the simulation model in order to get…